Last year, the creator of curl, Daniel Stenberg, wrote a blog post about a funny URL:

http://http://http://@http://http://?http://#http://

It’s a fun post, so give it a read. The author explains how that URL works and how different systems handle it.

One thing the post doesn’t get into is the impact of different systems handling the same URLs differently. This 2017 talk (slides, video) by Orange Tsai covers many more inconsistencies between libraries, and the security risks those inconsistencies cause.

The talk covers this topic in great (and entertaining) detail, but I wanted to summarize the basics.

Parts of a URL

As both the blog post and the talk above highlight, defining a URL is tricky. There is an RFC, a WHATWG specification, and numerous inconsistent implementations.

In broad strokes, here are the parts of a URL:

scheme://username:password@host:port/path?query#fragment

- scheme: The protocol used (http or https, for example).
- username:password: Websites using the Basic authentication scheme let you authenticate by putting your username and password in the URL itself. This is considered very insecure, so not many websites support it.
- host: The domain or IP address you want to connect to (google.com or 127.0.0.1, for example).
- port: A host is like an apartment building, and the port number addresses a specific apartment in that building. If the port is missing, the default for the scheme is used (80 for http and 443 for https).
- path: The specific webpage on the host. For example, this post has a path of /posts/what-is-a-url.html.
- query: A collection of parameters, usually in the form of key=value pairs joined together by &. These send the server more specific information.
- fragment: Usually used to link to a specific section of the document. For example, you can jump to this section using #parts. Note, however, that the fragment is not seen by the server; it is handled (or ignored) on the client side.
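Python’s standard library can split a URL into these parts. The sketch below runs urllib.parse.urlsplit on a made-up URL that uses every component; the credentials, host, port and query values are purely illustrative.

```python
from urllib.parse import parse_qs, urlsplit

# A made-up URL exercising every component described above.
url = "https://alice:s3cret@example.com:8443/posts/what-is-a-url.html?lang=en&page=2#parts"
parts = urlsplit(url)

print(parts.scheme)    # https
print(parts.username)  # alice
print(parts.password)  # s3cret
print(parts.hostname)  # example.com
print(parts.port)      # 8443 (an int; None when the URL omits the port)
print(parts.path)      # /posts/what-is-a-url.html
print(parts.query)     # lang=en&page=2
print(parts.fragment)  # parts

# The query string is usually a set of key=value pairs joined by "&".
print(parse_qs(parts.query))  # {'lang': ['en'], 'page': ['2']}
```

Note that urlsplit only reports what is written in the string: when the port is omitted, parts.port is None, and it is up to the HTTP client to fall back to 80 or 443.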

Differences and Difficulty

The problem with the above definition is that it’s not clear what is and isn’t allowed in each part of the URL. The formal specs define many more details, but differences in interpretation remain, particularly because the web is built on forgiving parsing that papers over the mistakes of other systems.

I will quote some examples from the talk linked above. These examples are six years old and the behavior of these libraries has since changed, but the outdated examples still illustrate the problem well.

Query or Username

http://1.1.1.1 &@ 2.2.2.2# @3.3.3.3/

How should this URL be parsed?

- If the host is 1.1.1.1, then everything after & (@ 2.2.2.2) is a query and the rest is a fragment because it’s after the #. This was the behavior of Python’s built-in urllib2 library.
- If the host is 2.2.2.2, then everything before the first @ (1.1.1.1 &) is the username and everything after # (@3.3.3.3/) is the fragment. This was the behavior of the requests Python library.
- If the host is 3.3.3.3, then everything before the second @ (1.1.1.1 &@ 2.2.2.2#) is the username. This was the behavior of Python’s built-in urllib library.

It is easy to see how a forgiving implementation with a best-effort strategy could reach any of the three conclusions. The current implementations of requests and urllib have converged on treating 1.1.1.1 &@ 2.2.2.2 as the authority section (user info plus host), because both now stop at the first # (urllib2 was removed in Python 3, so it is no longer maintained).
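For comparison, here is a quick check of how the standard library’s urllib.parse splits the same URL in a current Python 3 release (a spot check, not a survey of versions). The authority ends at the first #, and urllib.parse then splits it at the last @, so the username is 1.1.1.1 & and the host is 2.2.2.2 with the stray space still attached.

```python
from urllib.parse import urlsplit

# The URL from the talk, spaces included.
parts = urlsplit("http://1.1.1.1 &@ 2.2.2.2# @3.3.3.3/")

print(repr(parts.netloc))    # '1.1.1.1 &@ 2.2.2.2'  (authority: stops at the first '#')
print(repr(parts.username))  # '1.1.1.1 &'           (user info: before the last '@')
print(repr(parts.hostname))  # ' 2.2.2.2'            (host, leading space kept)
print(repr(parts.fragment))  # ' @3.3.3.3/'
```

So a modern urllib.parse effectively picks 2.2.2.2, but a validator that compares parts.hostname against "2.2.2.2" without rejecting the space would still get it wrong.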

Port or Path

http://127.0.0.1:5000:80/

How should this URL be parsed?

- If the port is 5000, then the path is :80/. This was the behavior of the readfile call in PHP.
- If the port is 80, then the host is 127.0.0.1:5000. This was the behavior of parse_url in PHP.
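Python’s urllib.parse takes a third approach to this URL. In the sketch below, it splits the host from the port at the first colon and only complains when you actually ask for the port.

```python
from urllib.parse import urlsplit

parts = urlsplit("http://127.0.0.1:5000:80/")
print(parts.netloc)    # 127.0.0.1:5000:80
print(parts.hostname)  # 127.0.0.1
print(parts.path)      # /

try:
    parts.port  # "5000:80" cannot be converted to a port number
except ValueError as exc:
    print("port rejected:", exc)
```

A validator that only reads parts.hostname would be satisfied here, while an HTTP client could end up on port 5000 or 80. Raising on the port is arguably the safest of the three behaviors, but only if your code actually reads it.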

Host Confusion

The host field tells the system where to send the request. It’s the most important part of the URL and as such comes with a host of complexities[1].

The host can be a domain like google.com, an IPv4 address like 127.0.0.1, or an IPv6 address like ::1. Both IPv4 and IPv6 have special cases and special formatting rules that can be inconsistently supported. For example, the RFC itself highlights possible inconsistencies in parsing IPv4 addresses:

- Some implementations support fewer than 4 parts. An address with 3 parts treats the last value as a 16-bit value (127.0.1). An address with 2 parts treats the last value as a 24-bit value (127.1). An address with 1 part parses the whole value as a 32-bit integer (2130706433).
- Some implementations allow each part to be written in decimal (127), octal (0177), or hex (0x7F).

So depending on the implementation, http://2130706433 may or may not be treated as equal to http://127.0.0.1.
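To make the permissive rules above concrete, here is a small hypothetical parser that follows them. The function names are made up for illustration and this is not the code of any particular library: it accepts one to four parts, each in decimal, octal (leading 0) or hex (0x), and packs them into a single 32-bit integer. Python’s own ipaddress module is strict and rejects the shortened forms, which is exactly the kind of disagreement this section is about.

```python
import ipaddress
import string

_DIGITS = {8: string.octdigits, 10: string.digits, 16: string.hexdigits}


def _parse_part(part: str) -> int:
    """Parse one dot-separated part as hex (0x...), octal (leading 0) or decimal."""
    if part[:2].lower() == "0x":
        digits, base = part[2:], 16
    elif len(part) > 1 and part.startswith("0"):
        digits, base = part[1:], 8
    else:
        digits, base = part, 10
    if not digits or any(c not in _DIGITS[base] for c in digits):
        raise ValueError(f"invalid IPv4 part: {part!r}")
    return int(digits, base)


def parse_legacy_ipv4(host: str) -> int:
    """Parse an IPv4 address using the permissive 1-4 part rules."""
    parts = host.split(".")
    if not 1 <= len(parts) <= 4:
        raise ValueError(f"not an IPv4 address: {host!r}")
    *leading, last = [_parse_part(p) for p in parts]
    # Every part except the last is a single byte...
    if any(value > 0xFF for value in leading):
        raise ValueError(f"part out of range in {host!r}")
    # ...and the last part fills the remaining 32, 24, 16 or 8 bits.
    last_bits = 8 * (4 - len(leading))
    if last >= 1 << last_bits:
        raise ValueError(f"last part out of range in {host!r}")
    result = 0
    for value in leading:
        result = (result << 8) | value
    return (result << last_bits) | last


for host in ["127.0.0.1", "127.0.1", "127.1", "2130706433", "0177.0.0.1", "0x7F.0.0.1"]:
    print(host, "->", ipaddress.IPv4Address(parse_legacy_ipv4(host)))  # all 127.0.0.1

try:
    ipaddress.IPv4Address("127.1")
except ipaddress.AddressValueError:
    print("ipaddress rejects 127.1")
```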

Risk

Okay, sure, so there are some inconsistencies, but what’s the big deal? Just don’t make weird URLs and you won’t run into edge cases.

The problem is that sometimes you have to deal with other people’s URLs. Particularly other people you don’t trust, also known as users.

Protecting Localhost

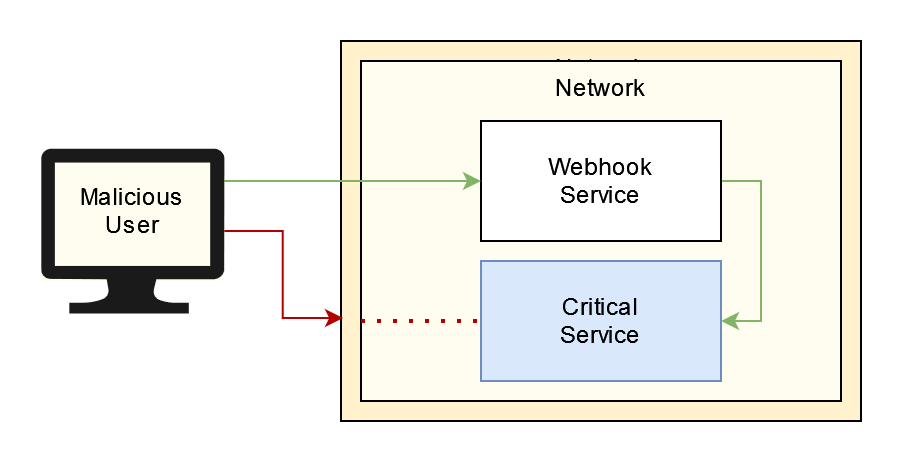

Imagine you are building a webhook system. Your users give you a URL and whenever an event occurs you send an HTTP request to that URL.

A risk with a system like this is Server-Side Request Forgery (SSRF): the user can make your server send a request to a destination you never intended. For example, you may have some critical service running on port 9000. Normally, users outside the network can’t send requests to this service. But if a user sets the webhook URL to http://localhost:9000/shutdown, your webhook system will send that HTTP request to the critical service from inside the network!

To prevent this, you might write code like this:

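```python
import urllib.parse

import requests


def call_webhook(url):
    parts = urllib.parse.urlparse(url)
    # Refuse to call URLs whose host points back at this machine.
    if isLocalHost(parts.hostname):
        raise ValueError("localhost is not allowed!")
    requests.get(url)
```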

How would we implement isLocalHost? Let’s start by only worrying about IP addresses. Remembering how many ways IPv4 and IPv6 addresses can be written, instead of comparing against specific strings we convert each address to its integer value and compare those (as recommended by the RFC). That way 127.0.0.1, 127.0.1, and 127.1 all map to the same value: 2130706433. The code can then look like this:

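```python
import ipaddress
import socket


def isLocalHost(hostname):
    # IPv4: socket.inet_aton accepts the shortened forms (127.1, 127.0.1,
    # 2130706433) and packs them into 4 bytes, so compare the integer value.
    try:
        decimal = int.from_bytes(socket.inet_aton(hostname), "big")
        return decimal == 2130706433  # 127.0.0.1
    except OSError:
        pass  # not an IPv4 address
    # IPv6: compare the integer value, so "::1" and "0:0:0:0:0:0:0:1" match.
    try:
        decimal = int(ipaddress.IPv6Address(hostname))
        return decimal == 1  # ::1
    except ipaddress.AddressValueError:
        return False  # a domain name; this version only checks IP addresses
```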

This seems pretty good, and we can pat ourselves on the back. But then a malicious user sends us this URL: http://0:9000/shutdown. As the slides point out, 0 maps to localhost on Linux! Since 0 equals neither 1 nor 2130706433, our validation lets the request through.

We followed the specification’s extra guidance and we still got screwed.
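A sturdier variant is sketched below, assuming you are able to resolve the hostname yourself before sending the request. Instead of comparing against a handful of known localhost values, it asks the operating system’s resolver which addresses it would actually connect to, then checks each one with the ipaddress module’s is_global property. The function name is hypothetical.

```python
import ipaddress
import socket


def resolves_to_internal_address(hostname: str, port: int = 80) -> bool:
    """Return True if any address `hostname` resolves to is not globally routable."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    for _family, _type, _proto, _canonname, sockaddr in infos:
        # sockaddr[0] is the numeric address chosen by the system resolver,
        # so forms like "0" or "127.1" arrive here already normalized.
        address = ipaddress.ip_address(sockaddr[0])
        if not address.is_global:  # loopback, private, unspecified, ...
            return True
    return False
```

This closes the 0 hole (it resolves to 0.0.0.0, which is not global), but two caveats remain. The HTTP client must then connect to the exact address you checked; otherwise the DNS answer can change between the check and the request (DNS rebinding). And the check still trusts urlparse to extract the same hostname the HTTP client will use.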

Allowlist of Domains

Another use case for URL validation is a domain allowlist. Suppose we are building a service that uploads daily datasets to an S3 bucket. Users can see the list of files in the bucket but can’t access the files themselves. They choose the file they are interested in and send the URL for that dataset to our service. We will download the data, analyze it, and send the summary back to the user.

The code for this can look something like this:

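```python
import urllib.parse

import requests


def pull_data(url):
    parts = urllib.parse.urlparse(url)
    # Only allow URLs that point at our own bucket.
    if parts.hostname != "companyname.s3.amazonaws.com":
        raise ValueError("Only companyname bucket allowed")
    # AWS_KEY_FOR_BUCKET and analyze() are placeholders: the request is sent
    # with credentials that grant access to the bucket.
    response = requests.get(url, auth=AWS_KEY_FOR_BUCKET)
    return analyze(response.content)
```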

Unlike the previous situation, where we had a blocklist, here we have an allowlist, which is generally more secure. Since we only allow URLs for our bucket, we can be more confident that we aren’t sending a request to the wrong host.

However, there is still a problem. Suppose the user sends a URL like this:

http://malicious-website.com#@ companyname.s3.amazonaws.com

We are using different libraries to validate the URL and to send the HTTP request. As pointed out earlier, urllib would have said the hostname was companyname.s3.amazonaws.com, but the requests library would have sent the request to malicious-website.com! To make matters worse, this request would contain the AWS API key[2], giving the attacker full access to our bucket!

And that’s the risk with inconsistent parsing of URLs between different libraries and systems.

Now What?

The vulnerabilities I mentioned above were found and fixed in 2016/2017, but the underlying problem has not gone away.

Here is a bug from Dec 2022, in a library that requests uses, that would have sent requests for http://domain:0 to the default port: http://domain:80.

Here is a bug from May 2022 in curl that would have sent the request for http://example.com%2F10.0.0.1/ to http://example.com/10.0.0.1/.

In both those situations, our validation would be bypassed. Is the port in the URL 80? No. Is the hostname in the URL example.com? No. And yet the request would go to port 80 and domain example.com respectively.
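One partial mitigation is to validate narrowly and reject anything unusual instead of trying to interpret it. The sketch below is one hypothetical way to do that in Python: it refuses credentials, explicit ports, and any hostname outside a deliberately small grammar (an assumption you would tune to your needs, not a standard). Both bugs above, and the earlier examples, are rejected before any other library sees the URL. This narrows the attack surface; it does not remove the underlying parser differences.

```python
import re
from urllib.parse import urlsplit

# Deliberately narrow hostname grammar (an assumption, not a standard):
# dot-separated labels of lowercase letters, digits and hyphens, where the
# last label must start with a letter so numeric hosts like "0" or
# "2130706433" never count as names.
_LABEL = r"(?!-)[a-z0-9-]{1,63}(?<!-)"
_TLD = r"[a-z][a-z0-9-]{0,62}(?<!-)"
_HOSTNAME_RE = re.compile(rf"(?:{_LABEL}\.)*{_TLD}")


def strict_host(url: str) -> str:
    """Return the URL's hostname, or raise ValueError if anything looks unusual."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https"):
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    if "@" in parts.netloc:
        raise ValueError("credentials in URLs are not allowed")
    if ":" in parts.netloc:
        raise ValueError("explicit ports are not allowed")
    host = parts.hostname
    if host is None or not _HOSTNAME_RE.fullmatch(host):
        raise ValueError(f"unexpected hostname: {parts.netloc!r}")
    return host
```

The returned hostname still has to be compared against your allowlist; for example, strict_host returns malicious-website.com for the URL from the allowlist example, which the comparison then rejects.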

So if this problem is ever-present, what can we do? The answer to most security issues is the same: don’t trust user input. Ideally, though, distrust user input at the architecture level. For example, in the situation where the user was sending us a URL for the S3 bucket, there is no reason to accept a full URL from the user at all. Let the user send you a file identifier and construct the URL in your own code[3].
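A minimal sketch of that idea, assuming the daily datasets are named by date. The bucket host comes from the example above; the naming scheme, the regular expression and the dataset_url function are hypothetical.

```python
import re
from urllib.parse import quote

# Hypothetical values: the bucket host from the example above and an
# assumed naming scheme for the daily datasets (e.g. "2023-01-15.csv").
BUCKET_HOST = "companyname.s3.amazonaws.com"
_FILE_ID_RE = re.compile(r"[0-9]{4}-[0-9]{2}-[0-9]{2}\.csv")


def dataset_url(file_id: str) -> str:
    """Build the dataset URL ourselves; the user only supplies an identifier."""
    if not _FILE_ID_RE.fullmatch(file_id):
        raise ValueError(f"unknown dataset identifier: {file_id!r}")
    # The host is a constant, so no user input can reach the authority part.
    return f"https://{BUCKET_HOST}/{quote(file_id)}"
```

Because the identifier format is so narrow, validating it is far easier than validating an arbitrary URL, though it still needs validating.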

The webhook example is much harder. OWASP’s Server-Side Request Forgery Prevention Cheat Sheet has some suggestions, but even it is pretty gloomy about the webhook use case. I think the best you can do is to isolate the service that calls the webhooks and strip it of privileges. That way, if the service does get tricked into calling an internal URL, it doesn’t have network access to other components, and even if it does, it doesn’t have the privileges to affect the system.

[1] lol

[2] This only works if you are using short-lived session tokens. If you generate request-specific signatures, it becomes much harder for the credentials to be reused.

[3] Of course, now the game is to validate those file identifiers! OWASP has a cheat sheet to help with input validation as well.